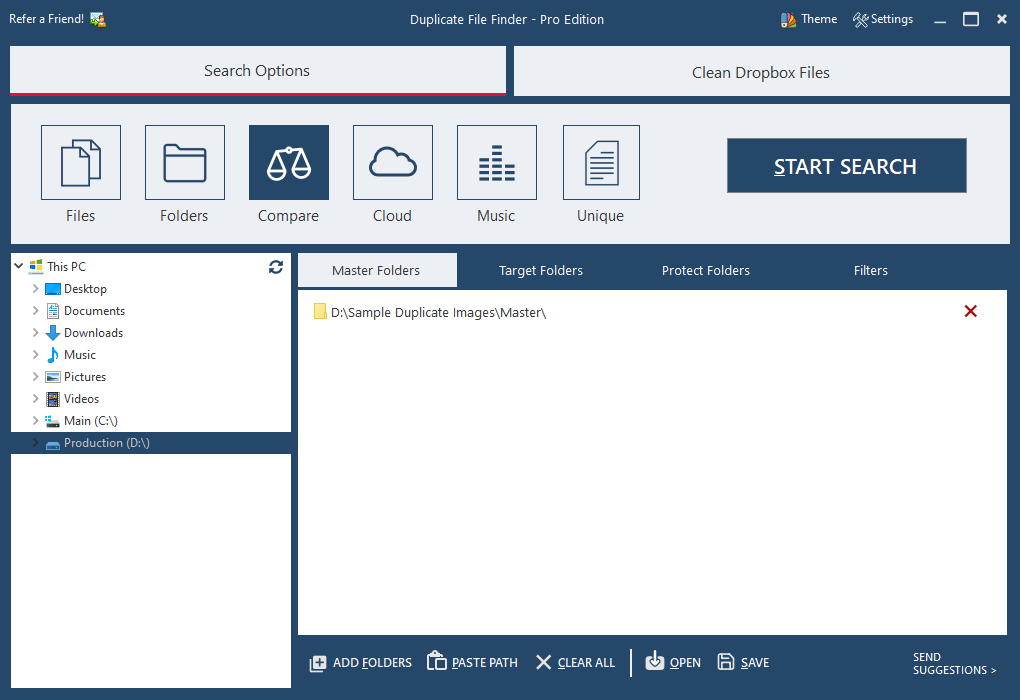

Duplicate files are often the result of users' mistakes, such as double copy actions or incorrect folder transfers. To avoid wasting space and driving up storage costs, you have to analyze your file structure, find duplicate files and remove them. A simple Windows PowerShell script can help you complete this tedious task faster. Just run the script provided in this post, being sure to specify the path to the folders you're interested in.

Alternatively, you might try Netwrix Auditor for Windows File Servers. Ditch manual scripting and analyze your storage in mere minutes. Then simply subscribe to easy-to-use reports that list duplicates, stale data and large files and get them automatically by email, so you can continuously spot and remediate issues as they arise and keep your storage orderly and efficient.

There is nothing vital in the folder, but it is useful to have those files, so I want to ensure I have a good copy of the folder. This folder is shown in the following figure.

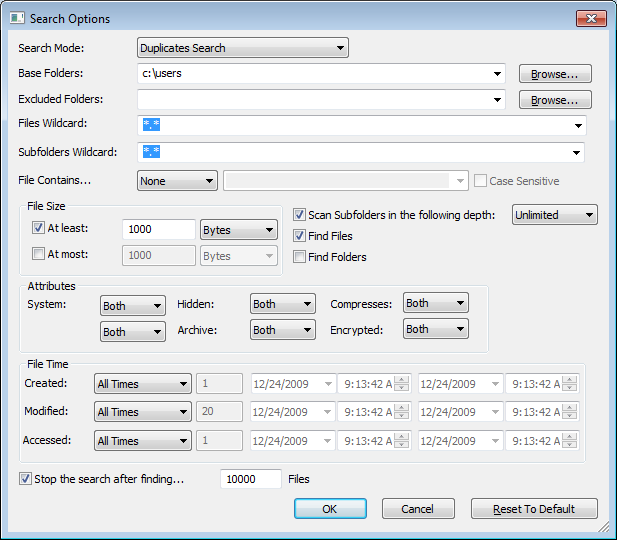

Anyone who manages file storage has to keep track of file sizes to ensure there is always enough free space. Documents, photos, backups and other files can quickly eat up your shared file resources, especially if you have a lot of duplicates.

Open the PowerShell ISE and run the script, adjusting the directory path. The script requires at least one argument: the path of the directory you wish to check for duplicate files. The check for duplicate files then runs. The script is only partly reproduced here; its surviving lines, in order, are:

`$Path = '\\PDC\Shared\Accounting' #define path to folders to find duplicate files`

`$Files=gci -File -Recurse -path $Path | Select-Object -property` …

`($SourceFile in $Files)`

`Write-Progress -Activity "Processing Files" -status "Processing File $Count / $TotalFiles" -PercentComplete ($Count / $TotalFiles *` …

`|Where-Object` …

`If (($SourceFile.FullName -ne $TargetFile.FullName) -and !(($MatchedSourceFiles | Select-Object -ExpandProperty File) -contains $TargetFile.FullName))`

`Write-Verbose "Matching $($SourceFile.FullName) and $($TargetFile.FullName)"`

`If ((fc.exe /A $SourceFile.FullName $TargetFile.FullName) -contains "FC: no differences encountered")`

`If ($MatchingFiles.Count -gt` …

Review the results.
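The script lines above suggest a two-stage check: narrow the candidates to files of the same length, then compare their contents. Here is a minimal sketch of that idea, not the original script. It assumes PowerShell 4.0 or later and swaps the `fc.exe` comparison for `Get-FileHash`, so it compares SHA256 hashes rather than running a pairwise file compare. The function name `Find-DuplicateFile` and the output property names are hypothetical.

```powershell
# Hypothetical helper: the name, the output shape, and the hash-based
# comparison are assumptions for illustration, not the original script.
function Find-DuplicateFile {
    param(
        [Parameter(Mandatory)]
        [string]$Path   # folder to scan, e.g. '\\PDC\Shared\Accounting'
    )

    # Files of different length cannot be duplicates, so keep only
    # the sizes that are shared by two or more files.
    $sizeGroups = Get-ChildItem -Path $Path -File -Recurse |
        Group-Object -Property Length |
        Where-Object { $_.Count -gt 1 }

    foreach ($sizeGroup in $sizeGroups) {
        # Hash only the same-size candidates, then group identical hashes.
        $sizeGroup.Group |
            Get-FileHash -Algorithm SHA256 |
            Group-Object -Property Hash |
            Where-Object { $_.Count -gt 1 } |
            ForEach-Object {
                # One output object per set of identical files.
                [pscustomobject]@{
                    Hash  = $_.Name
                    Files = @($_.Group.Path)
                }
            }
    }
}
```

A call such as `Find-DuplicateFile -Path '\\PDC\Shared\Accounting' | Format-List` would list each set of identical files. Hashing each candidate once avoids comparing every pair of files, which may matter on large shares. Note that all empty files share one hash and are therefore reported as duplicates of each other.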
